Google has reportedly restricted certain users from accessing its specialised AI coding assistant Antigravity, including some subscribers of Gemini AI Ultra, following what it described as suspicious and malicious usage patterns linked to the open-source coding agent framework OpenClaw.

According to statements shared on X by Varun Mohan, a Google DeepMind engineer and former Windsurf CEO, the company observed a “massive increase in malicious usage of the Antigravity backend,” which significantly degraded service quality for legitimate users.

Policy Violations and Backend Misuse

The affected accounts were reportedly using Gemini AI models through workflows tied to OpenClaw. Google stated that some use cases involved harmful, abusive or unauthorised activities that violated its terms of service.

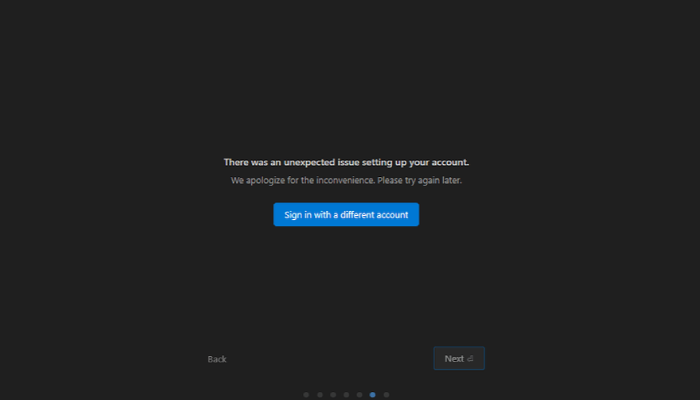

Mohan clarified that the restrictions specifically targeted misuse of the Antigravity backend rather than general Gemini access. “We needed to find a path to quickly shut off access to these users that are not using the product as intended,” he wrote, adding that some affected users may not have realised they were breaching the terms and could be offered a path to reinstatement.

The move has sparked debate among developers and enterprise users, particularly as AI coding assistants increasingly form part of daily workflows in software development, research and business operations. Restricting access to such tools can materially impact productivity and operational continuity.

Criticism from OpenClaw’s Founder

OpenClaw creator Peter Steinberger publicly criticised the action, describing it as “draconian.” In a post on X, he suggested that Google’s approach was less transparent and involved less engagement with developers than that of its competitors.

The situation echoes broader industry tensions around third-party integrations and API usage. Anthropic previously updated its consumer terms to prohibit the use of OAuth tokens in external tools, including OpenClaw, citing security and compliance concerns.

Broader AI Ecosystem Implications

As an open-source AI agent framework, OpenClaw has faced scrutiny over potential security risks associated with autonomous agent behaviour and token handling. Despite the controversy, Steinberger’s technical expertise remains highly regarded. OpenAI recently hired him, with CEO Sam Altman stating that Steinberger would help drive the next generation of personal AI agents.

The episode underscores a growing governance challenge in the AI ecosystem: balancing open innovation and third-party integrations with platform security, infrastructure stability and responsible use enforcement.