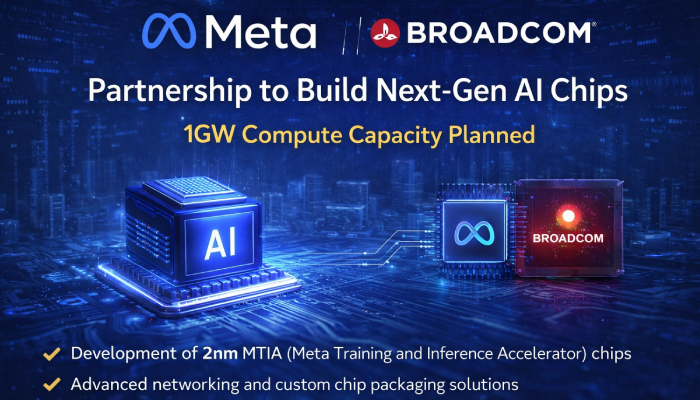

Meta has announced a major partnership with Broadcom to develop next-generation AI chips, marking a significant push toward building large-scale infrastructure for advanced artificial intelligence. The collaboration focuses on Meta’s in-house MTIA (Meta Training and Inference Accelerator) chips, with an initial deployment target of 1 gigawatt (GW) of compute capacity.

The agreement will see Broadcom work closely with Meta across chip design, advanced packaging, and high-performance networking systems. This integrated approach is aimed at creating a powerful and efficient computing backbone capable of supporting real-time AI services for billions of users across Meta’s platforms.

Broadcom revealed that the upcoming MTIA chips will be built on a 2nm process, positioning them among the most advanced AI compute accelerators in the industry. These chips are expected to power a multi-year infrastructure rollout, with Meta planning to scale beyond the initial 1 GW of capacity to several gigawatts over time.

Meta CEO Mark Zuckerberg highlighted the long-term vision behind the partnership, stating that the collaboration will help build the massive computing foundation required to deliver “personal superintelligence” at a global scale. The chips will play a crucial role in accelerating AI training and inference across Meta’s ecosystem.

The partnership also includes the use of Broadcom’s XPU platform, which enables custom AI chip development tailored to Meta’s specific workloads. Additionally, Broadcom will provide advanced Ethernet-based networking solutions to ensure fast, efficient communication across large AI clusters, which is critical for handling complex AI tasks at scale.

In a notable leadership update, Broadcom CEO Hock Tan will step down from Meta’s board of directors and transition into an advisory role, further strengthening strategic alignment between the two companies.

This move underscores Meta’s growing focus on vertical integration in AI hardware, reducing reliance on third-party chipmakers while optimizing performance for its own AI models and services. As competition intensifies in the AI infrastructure space, partnerships like this signal a shift toward custom silicon and massive compute investments to support next-generation AI capabilities.